Early American History

During the early twentieth century, Charles McLean Andrews (1863-1943), the most influential early American historian of his time, spent several years in British archives making detailed calendars of documents relating to the British colonies. (The Carnegie Institution sponsored this research.) These documents were then unknown in the United States. His “organized attack” on British archives resulted in the publication of three thick volumes (in 1908 and 1912-14), which listed hundreds of archival collections. The books covered not only the most obvious repositories (the Public Record Office and the British Library) but local repositories as well.

While teaching as a Fulbright lecturer at Nankai University, Tianjin, China, this past academic year, I engaged in a similar quest, for much the same reason—to introduce and make primary sources available to a new audience: in my case, Chinese scholars and students of early American history. Nankai University is a fabulous place for an American historian: it has a good library, excellent Web resources (“JSTOR,” “Early American Imprints,” and “Early American Newspapers” among them), and seven U.S. historians on staff! But Nankai is nearly unique among Chinese universities in this regard. Soon after arriving in Tianjin, I attended a conference on American history that Nankai sponsored. Talking to Chinese colleagues, I discovered that their universities have few printed primary sources on early America, much less subscriptions to expensive online collections. Many do not even subscribe to “JSTOR,” that critical online collection of journals. The databases Nankai does have barely served the interests of the graduate students in my classes.

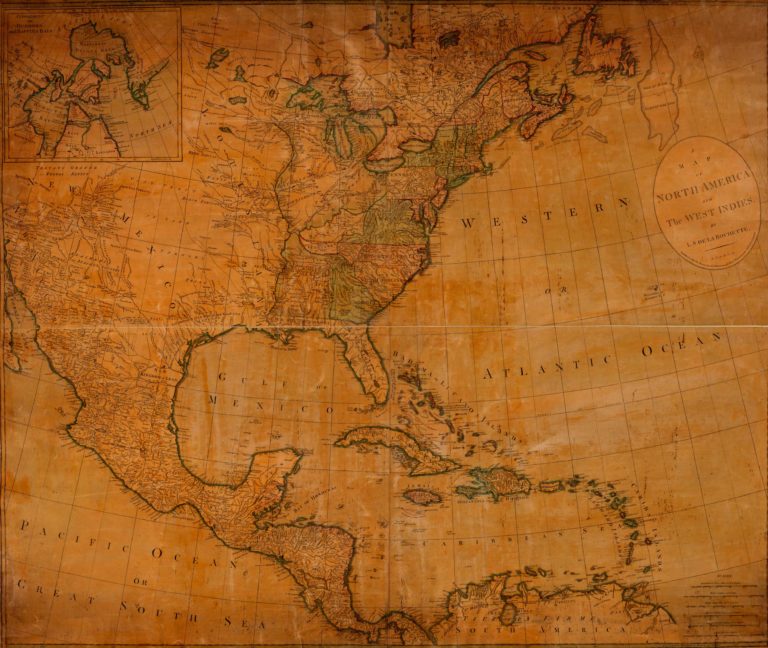

To help students and faculty at Nankai and elsewhere in China, I began to compile a list of free, Web-based primary sources on early America (generously extended to 1877). I wrote short descriptions of each Website, analogous to the essays Andrews composed in his archive bibliographies. Since I thought the key resources were relatively few, I believed the project would take a day or two, but after several months I had compiled a list of more than 1300 free sites containing tens of thousands of digitized primary sources, most of which I had not known could be found online. Early America is, verily, free and on the Web!

The list is being prepared for the Web and will be hosted by Common-place; you should be able to use it within the next year. It will be valuable to anyone interested in our early past, from university professors writing books to high-school students searching for materials for term papers to Common-place readers who want to explore old or new historical interests. It covers every discipline in American studies: history, literature, law, art, music, science, medicine, politics, religion, economics, anthropology, sociology, demography.

What did I find? The Internet contains everything from newspapers and magazines to travel accounts, from maps to sheet music, from woodcuts to oil paintings, from novels to critical essays, from the proceedings of governmental bodies to the intimate details of family life. Searchers can find materials on every imaginable topic: Civil War hospitals; the Salem witchcraft trials; Revolutionary and Civil War battles; proceedings of the Continental Congress, the Constitutional Convention, and the U.S. Congress; slave resistance; Indian battles; the abolition and proslavery movements; the beliefs and religious practices of Evangelicals and Unitarians; the Lewis and Clark expedition; westward migration; economic development and immigration; and the writings of Cotton Mather and Walt Whitman, to name but a few. In sum, there are far more primary sources on the Web than in public libraries (except the greatest) and community college libraries, though many fewer than in the libraries of research universities.

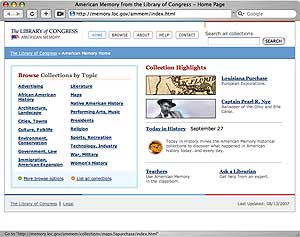

Hercule Poirot Searches the Internet

My Web bibliography grew as I gained proficiency in finding sources in obscure (and not-so-obscure) places. I began by looking at sites known to most U.S. historians: the Library of Congress’s American Memory sites; the Making of America site; Yale Law School’s Avalon Project site; and the Websites of universities noted for their commitment to digitizing U.S. history (Cornell, Michigan, Virginia, and Northern Illinois) and of organizations whose digital databases I had used (Colonial Williamsburg, Maryland Archives). I went on to look at major cultural institutions like the Smithsonian Institution or Harvard, knowing that they had probably digitized something of interest. Google searches were trickier, since Google looks for words, not concepts: putting “primary sources” in a search returns sites with those words but not necessarily sites with primary sources. Nonetheless, when all else failed, I tried Google searches. The sites these searches uncovered often contained primary sources or pointed the way (linked) to other sites and to detailed Web bibliographies (like Voice of the Shuttle or compilations of home pages of history museums). As I followed these links to still more sites, my list began to grow exponentially.

The Web is a vast puzzle that would trip up Sherlock Holmes or Hercule Poirot. I had to use all my historical skills to think like the folks who put digital materials on the Web. Many times I searched for sources I thought must be on the Web but came up empty; no obvious place proved fruitful. Often I found what I was looking for while searching for something else. For example, I searched repeatedly for digital versions of the Pennsylvania Archives, a multivolume collection of printed primary sources published from the mid-nineteenth to the early twentieth century. But it appeared to be missing. I finally found a link to a free version of the Pennsylvania Archives when searching for Revolutionary War pension records at “footnote.com” (a subscription service geared to genealogists).

Other times, I had to find the right search terms. I knew, from a student paper completed for my Revolutionary-era course, that many Revolutionary War pension records had been digitized, mostly by genealogists. Because the veteran had to prove his service, the records often contained memories of enlistment, battles, camp life, and geographic movement after the war; widows who applied sometimes included rich social-history details to prove their marriages. But repeated searches came up empty. Then I changed the search terms from “revolutionary war pension records” to “revolutionary war pension applications.” That search turned up a few digitized records. When I went to the root directory of those records, I discovered the USGenWeb Archives Pension Project: Revolutionary War, a collaborative genealogical project, which, as of this writing, contains transcripts (some just summaries) of over 1700 pension applications from all the states.

More often I figured out a strategy to uncover resources. After discovering that art museums often digitized their collections, I searched Websites of such institutions as the Metropolitan Museum of Art or the National Gallery; when I found an online index of art museums, I looked at all the listed institutions. I proceeded similarly when searching for documents about early universities. I had found several early university charters, records of boards of trustees, and catalogues. I figured that many others must be available on the Web. But I did not have a list of early colleges, particularly those begun in the post-Revolutionary decades. I discovered a 1962 article by Walter Eells from the History of Education Quarterly in “JSTOR” that reprinted a list the Connecticut Journal published in 1817, complete with founding dates of colleges. From there, I searched the libraries and archives of every listed institution (and their successor bodies) and discovered primary sources on fourteen of the thirty-five schools, including all but one college (Columbia University) founded in the colonial era.

Perseverance sometimes paid off. Much searching had uncovered few digital primary sources on the early history of Jews in the United States. Surely the “people of the book” wanted to document, on the Web, their historical presence (however small) in early America. Granted, several Jewish history museums had digitized pictures of liturgical implements, but I could find few texts. I searched all the obvious places, including the archives of the three major Jewish theological seminaries (Hebrew Union, Jewish Theological, and Yeshiva). One last try uncovered the right search terms at the Jewish Theological Seminary, whose library has digitized a large collection of early newspaper clippings and one hundred pamphlets, a third published by 1870. Since I first accessed this database, the Jewish Theological Seminary has redesigned its Website and has not yet assigned a URL to these databases. Such are the perils of anyone constructing a Web bibliography!

The Common-place Web bibliography will always be a work in progress. Since broken links like those for the Jewish Theological Seminary site abound, users will be able to report them on the Website. I know, moreover, that I missed much good material. Web calendars of archival collections demonstrate, for instance, the existence of many local Roman Catholic materials, but I found none digitized. Neither compilations of New England town records nor transcripts of Quaker meeting records appear to be on the Web, despite the vast numbers that survive. They may be hiding in cyberspace, waiting to be found. Users will be invited to send in URLs and short descriptions of new sites and to write reviews of any of the listed sites. That way, the bibliography will become a vital, living, and growing resource.

What Digitized Primary Sources Tell Us about History and Memory

I learned a great deal about history and memory in contemporary America while looking for Websites. I would like to share some of my discoveries with readers of Common-place. When one looks at the list as a whole, one sees a vision of the history of North America from the colonial era through Reconstruction similar to that of college-level survey textbooks. But the Web is not a place where resources float in ether; they grow from the concerted efforts of hundreds of institutions and thousands of individuals. Putting materials on the Web is a time-consuming process: they must be discovered, digitized, indexed, and uploaded. Historians, archivists, librarians, curators, genealogists, and institutions like the Library of Congress all put historical sources on the Web. These individuals and institutions have competing interests and hold widely contrasting views of American history. As one looks in detail at Web primary sources, one senses intense conflict and contest over the meaning of our past, over the historical memories these groups wish to sustain or suppress. Who holds the keys to our history: historians, archivists, preachers, politicians, ordinary citizens?

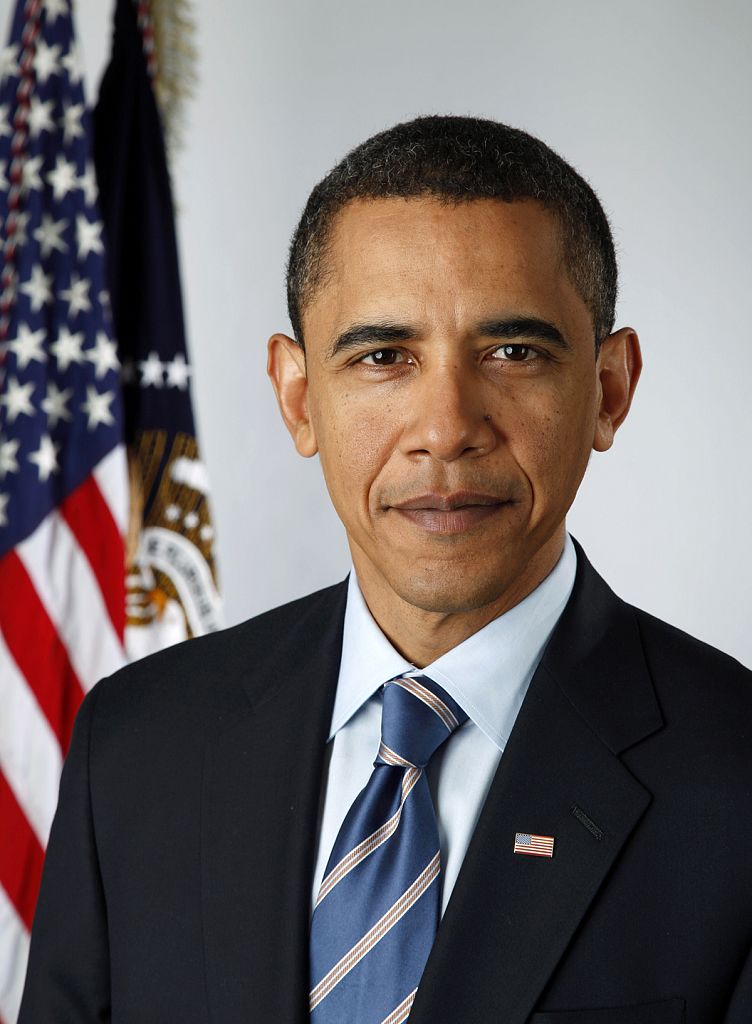

I did not begin my search with preconceived ideas about the type of history I would seek, and I looked in many areas (like history of science) about which I knew little. Nonetheless, the list in its totality resembles the agenda historians (particularly those influenced by the “new social history”) have followed over the past several decades. Web resources emphasize the social and cultural experience of ordinary people: their life stories, values, beliefs, politics, family relations. For instance, there are numerous writings on the Web by and about African Americans, Indians, and free white women. The proliferation of such sites reflects the urge (in the phrase of the 1960s) to do history “from the bottom up,” which, in turn, grew out of historians’ support of the civil rights and women’s movements. Other subfields of social history are also well represented: histories of urban places, popular culture, warfare, religious practice, popular politics (particularly political campaigns), and social reforms (abolition and the women’s movement).

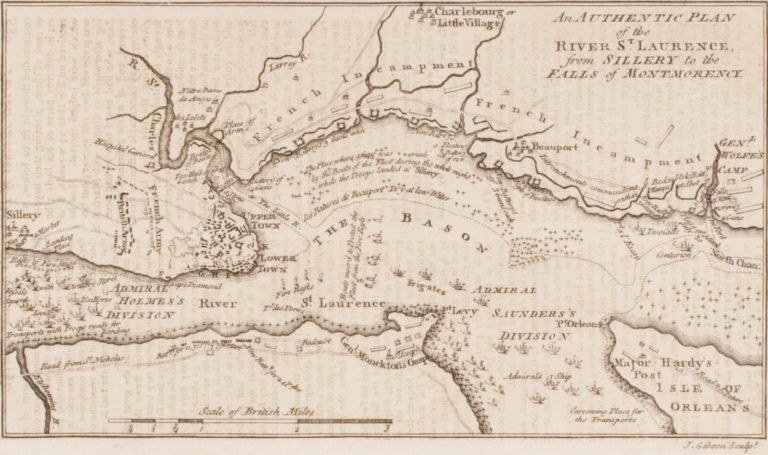

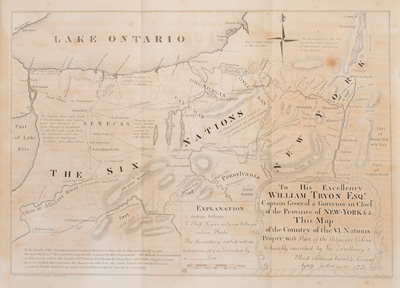

Historians have increasingly turned to images—paintings, popular art, woodcuts, objects, maps, photographs—to explain the worldview, daily life, and beliefs of the country’s rulers, ordinary folk, and subservient groups. (See, for instance, “Revolution in Print: Graphics in Nineteenth-Century America,” the April 2007 issue of Common-place.) The Web is crammed with images. Not only can users find digital copies of works by Copley, Stuart, Audubon, and the Hudson River School, but they can also find popular Currier and Ives prints as well as George Caleb Bingham’s political genre paintings. Thousands of early maps, starting in the sixteenth century, have been digitized. Searchers can find numerous photographs of Indian ceremonial objects and of African religious statues. Early photographs, particularly of the Civil War, are extraordinarily plentiful, including portraits, battle scenes, and cityscapes.

The “new social history,” so widely practiced by American historians, followed the interests of the French Annales school more than those of the British “people’s history” school, with its stress on class struggle. In its quest for the sources of social conflict, the latter tended to study workers, farm laborers, deviants (like criminals), and the poor. There are many fewer online primary sources about American farmers, deviants, and the poor (of whatever race) than about Indians or African Americans. British historians and archivists have placed far more primary materials about poverty and deviance on the Web than their American colleagues. Tellingly, materials on the Salem witches, the one thoroughly documented group of colonial deviants, provide rich evidence about free women.

But surely academic fashion, more than ideology, is one of the primary determinants of what ends up on the Web. For example, quantifiable materials (probate inventories, population census manuscripts) are scarce. One can find samples of censuses and transcripts from Plymouth Colony and probate inventories from Virginia but few other local examples. Such absence can be traced to the decline of quantitative history. Several decades ago, historians published many studies using probate inventories and censuses, but, by and large, the historical guild abandoned quantification a decade or more before the rise of the Internet.

Similarly, much of what does end up on the Web does so less out of any sense of civic obligation or educational engagement than out of the specific needs of specific researchers. My own labors demonstrate this. I needed Peter Force’s voluminous and ill-indexed nine-volume set of documents from the pivotal years 1774-1776, called American Archives, for my work on farmers in the American Revolution. Since it is a set of great value to anyone studying the coming of the Revolutionary War, my colleagues at Northern Illinois University libraries and I were able to persuade the NEH to fund its digitization. These documents are now available at the American Archives Website.

Historians of science and medicine have placed extensive research materials on the Web. One can find medical texts (both European and American) and most of the works of world-famous scientists like Newton, Boyle, and Darwin on the Web. Early American scientists (though less well known than their European contemporaries) are well represented; works by Louis Agassiz and by Joseph Henry, for instance, have been digitized. Sites devoted to the social history of science and especially of medicine are more accessible to a general public: several sites examine Civil War hospitals and medicine or women doctors; those who want to learn about the work of a country physician can consult Patients’ Voices in Early Nineteenth Century Virginia, which includes letters to two Fredericksburg doctors, 1816-1830.

Many others, with interests and politics far different from those of most academic historians, have placed primary sources on the Web. Genealogists and social historians are often interested in the same records (census records, land records, tax lists, military records) but for different reasons: genealogists search for particular individuals; historians seek patterns and data. Many genealogists (or organized groups like ancestry.com) and the state archives where genealogists work have Websites indexing the names on those records. But these sites often have little original data and can rarely be used to compile numerical estimates (age of soldiers, fertility rates, or similar measures).

Evangelical Protestants, seeking to nurture believers and attract converts, have digitized Protestant Bibles and Reformation classics, including works by Luther, Calvin, and other reformers, in English translation (Christian Classics: Ethereal Library, for instance). Seeking a return to the purity of their colonial forefathers, orthodox Calvinists have placed many Puritan texts on the Web, with such titles as “Fire and Ice.” They have also placed on the Web sermons of prominent early American preachers such as George Whitefield, Jonathan Edwards, John Wesley, Isaac Backus, Charles Finney, Barton Stone, and Thomas Campbell. Almost as if in response, mainline and liberal denominations have digitized their canonical texts (the Book of Common Prayer, Quaker testimonies, classic Unitarian and Universalist texts).

Ideological conservatives and libertarians, who believe history should emphasize the great achievements of great men, have placed the voluminous writings of the founding generation on the Web. The Liberty Fund, a libertarian group, has been particularly active; its Library of Liberty includes hundreds of works of political philosophy, political theory, economics, and religion, with heavy coverage of the seventeenth and eighteenth centuries, including a big collection on the American Revolution. Ideologically similar Internet libraries (at American Colonists’ Library and founding.com) collect digital versions of books the founders read, from antiquity to their own time.

Americans of all political views consider the political thought and activities of the founding generation of great significance. Just as conservatives seek to preserve our revolutionary heritage and liberal historians seek to have the fullest possible historical record, ordinary Americans buy popular biographies of the founders by the hundreds of thousands. Understanding the popularity of the founders, the Library of Congress, through its “American Memory” collections, has invested heavily in creating digital versions of their works. That huge site includes digitized versions of manuscripts from Washington (and see also the Fitzpatrick edition of printed letters), Jefferson (and see also the Thomas Jefferson digital archive), and Madison in facsimile and sometimes typescript. (There are extensive collections of Franklin, John Adams, Hamilton, and Jay papers elsewhere.) “American Memory” also includes digital editions of Max Farrand’s authoritative edition of the proceedings of the Constitutional Convention, Elliot’s debates on state ratification, and one of many online versions of the Federalist Papers.

What do digitized primary sources, as a whole, tell us about public interest and historical memory? A few individuals and social movements have garnered overwhelming support, across the social and political spectrum. Abraham Lincoln is the most popular early American denizen of the Web. Countless sites reprint his correspondence and speeches, reproduce digital images of his portrait, or document his life, assassination, and times. These sites come from a wide variety of places: the Lincoln papers at the Library of Congress; a digital version of the Basler edition of Lincoln’s writings; Abraham Lincoln Net, with documents, images, and sounds concerning Lincoln’s Illinois years (including the Lincoln-Douglas debates); and an extraordinary number of portraits, political cartoons, and other images, many made possible by the spread of photographic technology (see, for instance, the Indiana Historical Society’s Lincoln collections).

Both historians and the general public seek information about the most dramatic events in American history, whatever their historical significance. A site with primary sources on famous trials includes such high drama as the Salem witchcraft trials, the Burr conspiracy, the Amistad trials, the John Brown uprising at Harper’s Ferry, and the Andrew Johnson impeachment trial. Multiple sites cover the Salem trials, the Lewis and Clark expedition, the overland trails, and the California gold rush.

It is also worth noting the heavy representation of amateur historians on the Web, particularly among sites devoted to the Civil War. Many families still have Civil War records in their attics, and some of them (particularly those working on genealogies) transcribe letters and diaries and put them on the Web. Libraries and archives know that the Civil War fascinates their users, and institutions from coast to coast, from Duke to Bowling Green State to the University of Washington, have digitized parts of their Civil War collections. Reenactors are more interested in the details of war and battles; they not only have their own sites but can find a complete digital set of the War of the Rebellion at Cornell’s Making of America site (go to “browse monographs” at the bottom of the page) along with innumerable muster rolls on the Web (three volumes from Maryland are digitized).

No longer can high school students (or their teachers), much less those in colleges here and abroad, complain about a lack of original sources on the first two and a half centuries of our history. Send students to the Web and watch term papers improve! Instead of bemoaning ordinary Americans’ ignorance of their past, members of Congress and their conservative allies should get busy and tell their constituents about Websites that preserve our heritage. Common-place is doing its part by sponsoring the Web bibliography, an announcement of which will appear in your in-box soon! The ball is now in your court!

This essay is dedicated to the memory of my Northern Illinois University colleague (and Common-place coauthor) Tara Dirst, whose technical direction of the “Abraham Lincoln” and “American Archives” Websites has enriched all users of the Web, and to my Chinese colleagues (especially Zhang Juguo) and students (especially Dong Yu and Ye Fanmei) at Nankai University, without whom I would neither have conceived nor compiled my Web bibliography.

Further Reading:

Charles Andrews would feel right at home in the world of Web bibliographies. See his two books, Guide to the manuscript materials for the history of the United States to 1783 (Washington, D.C., 1908) and Guide to the materials for American history, to 1783, 2 vol. (Washington, D.C., 1912-14).

Readers can trace some of my detective work by following the Web searches I describe. The Eells article mentioned in the text is Walter Crosby Eells, “First Directory of American Colleges,” History of Education Quarterly 2 (Dec., 1962) and is available to those with access to “JSTOR.”

There is a great need for teaching and research guides to large Websites that highlight the most important resources contained in them. For examples of what can be done, see the lesson plans at Northern Illinois University’s Lincoln/net. Common-place’s own “Tales from the Vault” occasionally highlights Web resources. See, for instance, Tara Dirst and Allan Kulikoff, “Was Dr. Benjamin Church a Traitor? A new way to find out,” in the October 2005 issue, and Mary Beth Norton, “Salem Witchcraft in the Classroom: With bewitching results,” in the January 2006 issue.

This article originally appeared in issue 8.1 (October, 2007).