Sense and Sympathy

Inspired by the advances in gender and women’s history, interest in the study of manhood has grown dramatically in the course of the last fifteen years. Scholars in a broad variety of fields have recognized that the construction of masculinities and femininities constitutes fundamental social and cultural processes. Caleb Crain’s stimulating American Sympathy: Men, Friendship, and Literature in the New Nation concentrates on one dimension of this process. Crain uses the diaries, letters, and essays written by three groups–a circle of young Philadelphians in the 1780s, Charles Brockden Brown and friends, and Ralph Waldo Emerson and the Transcendentalists–to understand how these men described, questioned, and mediated their friendship through writing, and what their words and acts can tell us about changing conceptions of manliness and acceptable manly behavior in the early republic.

In the late-eighteenth-century world “sympathy” denoted a cluster of feelings revolving around emotional closeness and empathy. In this regard sympathy appears to have had a more specific meaning than the more frequently used and now better known term “sensibility.” Crain establishes sympathy as a specific stage in the history of emotions: “At the height of sympathy’s reign, American men could express emotions to each other with a fervor and openness that could not have been detached from religious enthusiasm a generation earlier and would have to be consigned to sexual perversion a few generations later” (35). By no means paradoxically, the core of this book on compassion is framed by two executions: of John André, hanged as a British spy during the American Revolution, and of Billy Budd, the fictional sailor of Herman Melville’s last novel, hanged for instigating a mutiny at sea. André was the object of the American officers’ sympathy, not least that of the young Alexander Hamilton, whose account of André’s captivity and death was among sympathy’s earliest literary representations. Melville, in turn, described a maritime world in which one man’s feelings for another man could no longer be expressed openly.

Inevitably, questions over the character of these relationships quickly move into the foreground. Did the emotional bonds between men assume homoerotic or homosexual dimensions? Here American Sympathy deals with a historical topic that is notoriously difficult to recover and distorted by layers of presentist assumptions. Like previous scholars, such as Anthony Rotundo, Crain carefully and convincingly recreates a culture in which physical intimacy, such as sharing a bed and affection between men, did not translate into our understanding of sexual relations and homoeroticism. Ultimately, Crain considers “the question of did they or didn’t they somewhat irrelevant” (48). While some readers may consider this approach a cop-out, Crain certainly takes into account what and how much can be said based on the available evidence. Instead, he concentrates on sympathy and its literary portrayals, “how a group of men in the United States wrote about what they felt for one another, and how in the process they created literature” (13).

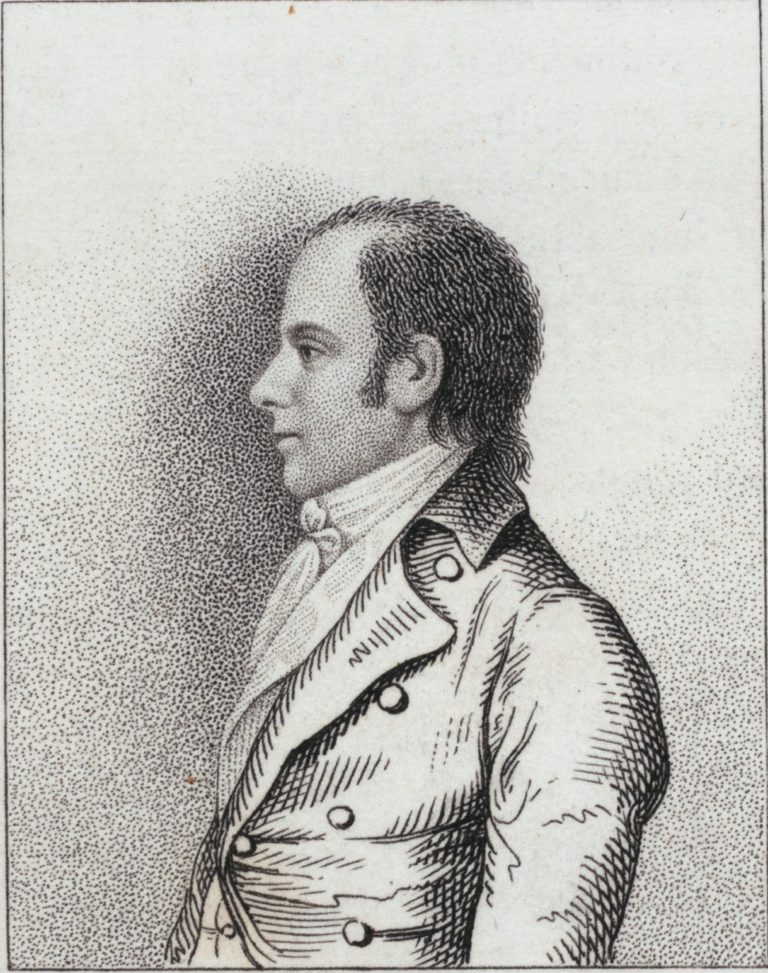

Crain begins with three Philadelphians who embraced a new understanding of friendship, free of colonial patronage relations, mercantile self-interest, and the duties of marriage. The diaries they kept for each other’s perusal reveal a complex mixture of intimacy and sincerity as well as frequent emotional testing and manipulation. A little later, Charles Brockden Brown went further in letters to his friends that blended disguise, invention, and dissimulation to test their sympathy–but disclosed little about Brown.

At the same time as Brown concealed his true feelings, he also tried to establish a sincere and “sympathetic identification” with his friends (72). Brown had laid out the irreducible prerequisites for a “romantic friendship”: “Between friends there must exist a perfect and entire similarity of disposition . . . Soul must be knit unto Soul” (66-67). As Brown and his circle of correspondents worked through the choices and tensions facing young men choosing a career in the 1790s, his high hopes clashed with reality. Initially Brown was torn between pursuing professional success and his literary interests. Interacting with his friends contributed to the slow and often torturous process of recognizing his calling as an author. However, different occupational choices–and Brown’s refusal to make a respectable choice–tore apart the close bonds with some of his correspondents.

In contrast, Elihu Hubbard Smith, a New York physician, scientist, and editor, not only supported Brown’s literary aspirations, but forced him to be sincere rather than evasive and pretending. Smith’s encouragement–brought to an end by his death in 1798–forced Brown to turn to writing his first fully developed pieces of fiction to exercise his imagination, rather than to spring more tales on his friends. Brown’s characters confronted authenticity and deception, sincerity and artifice, and the limited possibilities of achieving a lasting sympathetic connection to another human being. While Crain nowhere suggests that some of the major themes of Brown’s fiction were merely an extension of his life, he makes it thoroughly clear that they cannot be dissociated from the life.

Crain invokes Tocqueville to describe the “democratic sympathy” of the 1830s. Without the feudal ties of Europe that commanded allegiance and support, Americans stood alone, and adopted a “general compassion” for the plight of others that encompassed their neighbors as much as strangers. “But because democrats felt sympathy so promiscuously, their emotions were spread thin” (149). Crain, still following Tocqueville, credits the influence of a democratic public sphere, the power and omnipresence of print and association, in transforming American sympathy. This is the most explicit connection Crain makes between social and cultural developments and his history of sympathy. Emerson epitomizes the new public intellectual and professional writer suited to understand the changes in the affective economy. “Like Tocqueville, Emerson recognized that modern men resembled each other more and more but affected each other less and less” (151).

Emerson turned to literature as the only legitimate and safe means to write about love between men in a culture that increasingly stigmatized the private as well as public expression of such feelings. For Emerson, writing became not just the expression of desire, but the relationship itself: “The cultural logic that granted literature this dispensation to express homosexual sentiments, not permissible elsewhere in public, would coincide with the attraction that his literary exception must have had for men who felt tabooed homosexual sentiments in private” (152). In contrast to the Philadelphia diarists as well as Brown and his friends, Emerson’s personal letters became a substitute for having an “original relationship” with another man: for him, writing carried “not just the weight of recording a relationship but the weight of being a relationship” (173).

Crain establishes this pattern in two chapters on Emerson’s torturous crush on another Harvard student and on his relations with members of the Transcendentalist circle. Despite Emerson’s published remarks in “Friendship” that advocated the search for a soulmate and did not appear to distinguish homosexual from heterosexual relationships, he was much more effusive when describing his affection for men. Ultimately, he preferred to keep both men and women at an emotional distance, and would have preferred to see the Transcendentalists forever suspended in a variety of platonic rather than monogamous physical relations.

As a historian, I am of two minds about American Sympathy. In trying to present the book’s argument, I have focused on what it can tell us about the history of male friendship and emotions in the early republic. Consequently, I have imposed a perhaps too great coherence on Crain’s narrative. And I have given more weight to Tocqueville’s position in this story than Crain would probably find acceptable, and downplayed his rich and sophisticated literary analysis and occasionally dazzling explication of detail. My version does not credit many of the book’s subplots and approaches, including the use of psychoanalytic concepts in the chapter on Brown’s fiction, or Crain’s turn to Socrates and Virgil on homosexual desire to investigate the genealogy of Emerson’s poetry. Similarly, the chapter on the sources of Emerson’s “Friendship” essay uses Margaret Fuller as a foil, and is as much about Fuller as Emerson, and also deals in some detail with the romantic connections between Emerson’s male and female friends. Crain’s treatment of Melville’s Billy Budd opens with Oscar Wilde, then turns to the novel’s reception history, before comparing it with its adaptation in Benjamin Britten’s opera. None of this is irrelevant, and taken together it adds layer upon layer to our understanding of these writers.

But what can Crain’s reading of these writers tell us about American emotional history in this period? Even though Crain invokes Tocqueville and presents Emerson as particularly attuned to larger currents, he is reluctant to draw connections between his subjects and American culture, or to look elsewhere for further support of his interpretation. And Crain suggests that he is studying a distinct cultural formation, beginning during the American Revolution and fading away in the middle of the nineteenth century; but he left me looking for a fuller explanation of the causes of the rise and fall of sympathy. As a work of literary biography and analysis American Sympathy is compelling. As a work of history it has to claim Emerson as its main defender: “there is properly no history; only biography” (152).

Further Reading: The best historical monograph on American masculinity remains E. Anthony Rotundo, American Manhood: Transformations in Masculinity from the Revolution to the Modern Era (New York, 1993); more specifically see also Rotundo, “Romantic Friendship: Male Intimacy and Middle-Class Youth in the Northern United States, 1800-1900,” Journal of Social History 23 (1989): 1-25, and Anya Jabour, “Male Friendship and Masculinity in the Early National South: William Wirt and His Friends,” Journal of the Early Republic 20 (2000): 83-111. For additional background on the study of the history of manhood see John Tosh’s influential “What Should Historians Do With Masculinity? Reflections on Nineteenth-Century Britain,” History Workshop 38 (1994): 179-202, as well as Bryce Traister, “Academic Viagra: The Rise of American Masculinity Studies,” American Quarterly 52 (2000): 274-304. For another example of the literary mediation of friendship see Lucia McMahon and Deborah Schriver, eds., To Read My Heart: The Journal of Rachel Van Dyke, 1810-1811 (Philadelphia, 2000). On similar literary communities see David S. Shields, Civil Tongues and Polite Letters in British America (Chapel Hill, 1997), and Catherine Kaplan’s forthcoming We, the Readers: Culture, Opposition, and Community in the New Nation. For an introduction to the history of emotions see Peter N. Stearns and Jan Lewis, eds., An Emotional History of the United States (New York, 1998).

This article originally appeared in issue 2.2 (January, 2002).

Albrecht Koschnik teaches early American history at Florida State University in Tallahassee. He is currently writing a book on young men, voluntary associations, and political culture in early republican Philadelphia, and most recently published “The Democratic Societies of Philadelphia and the Limits of the American Public Sphere, circa 1793-1795,” William and Mary Quarterly, 3d ser., 57 (July 2001): 615-36.